The DRIVE Series - April 2026

Discoveries, Resources, Ideas, Values, Experiments

Welcome to the DRIVE series, complete with a gimmicky name for my pleasure. At the end of every month, I’ll provide some combination of discoveries, resources, ideas, values, or experiments involving AI, technology, and healthcare. Let’s go!

If March was busy, April was even busier, so I didn’t write any posts. However, it was creatively fruitful, so I have a bunch of cool projects I’ll be releasing in the next few months. Here are my favorite links and articles in no particular order.

Project Glasswing

By Anthropic

Assessing Claude Mythos Preview’s Cybersecurity Capabilities

Red Anthropic

Anthropic’s New AI Model Sets Off Global Alarms

By Paul Mozur and Adam Satariano - The New York Times

We start with the most important news of the month: Anthropic’s announcement that they have developed a general-purpose, unreleased frontier AI model with coding capability so high that it can discover and exploit software security vulnerabilities. They call the model Mythos and claim that it has already discovered thousands of security vulnerabilities in “every major operating system and web browser.”

Some of these are zero-day vulnerabilities, software flaws that the developers were unaware of, and some of them were decades old. Anthropic has not released the model for widespread use, but has instead convened a consortium of companies that includes Apple, Google, Amazon, JPMorganChase, NVIDIA, Microsoft, Linux, and others to use the model on their own systems to protect themselves before models like these become more widespread. They call the initiative Project Glasswing. Unfortunately, I have not seen any information about a major healthcare company or hospital being involved.

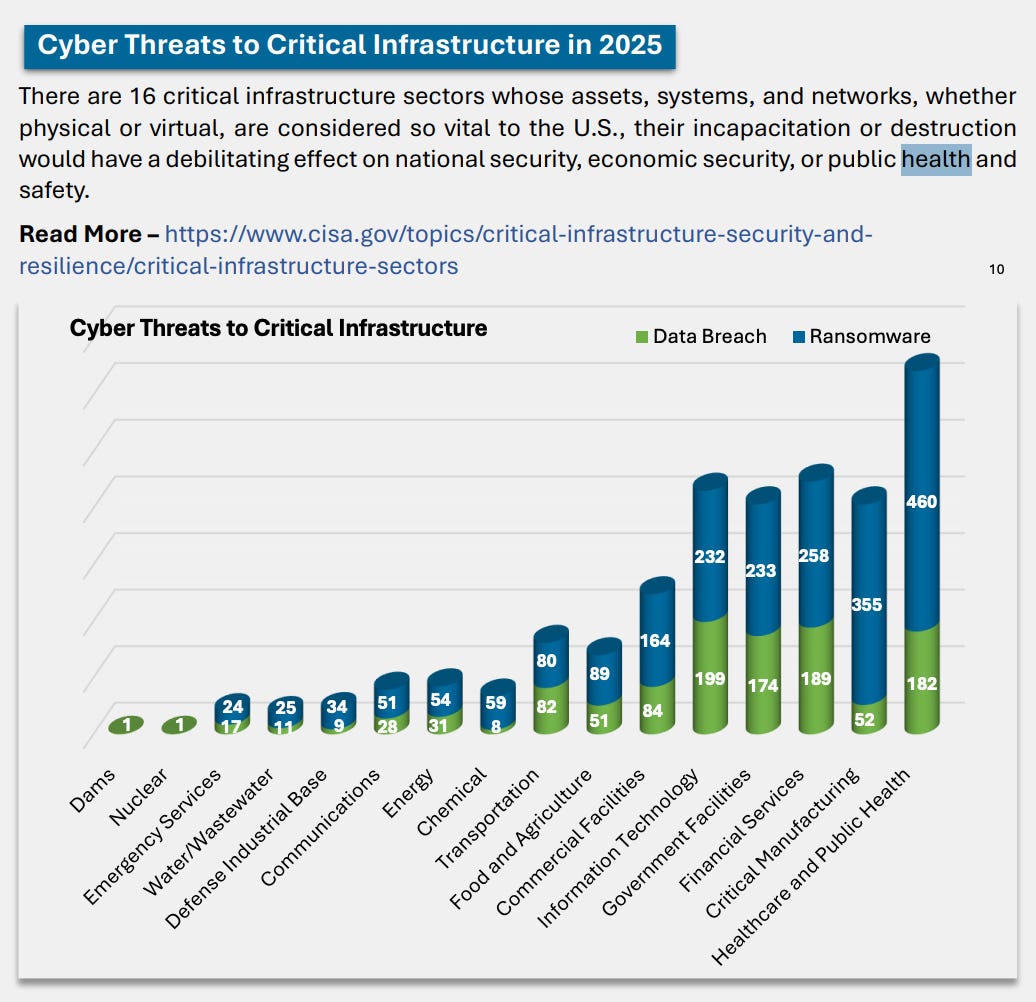

Obviously this is a big deal in all industries, but especially in healthcare, where the security systems tend to be fragmented, aging, and prone to hacks and exploits. In fact, the FBI recently reported that the healthcare industry was the top target for ransomware and other cyberthreats in 2025. You can check out their 2025 Internet Crime Report here.

The Labor Department Wants to Teach You To Use AI. Here’s What We Found

By Huo Jingnan - NPR

Make America AI Ready - U.S. Department of Labor

CS309: The Essentials of AI for Life and Society - University of Texas

This NPR article introduced me to a couple of AI literacy courses for the masses, one from the U.S. Department of Labor and one from the University of Texas. On preliminary review, they seem helpful and educational, especially the one from the University of Texas, which is a 3-credit course with video lectures that they have made freely available.

Pangram

I recently discovered Pangram, which is a tool to help detect AI-generated content.

We Don’t Really Know How AI Works. That’s a Problem.

By Oliver Whang - The New York Times

The Urgency of Interpretability - By Dario Amodei - CEO of Anthropic

Interpretability is a concept in computer science that suggests we should treat AI more as a natural phenomenon than as a human technology. Essentially, many AI models are black boxes: we do not necessarily know how they work or how they derived their answers because their output is dependent on millions of mathematical calculations. In science and medicine, this is a problem: how can we test a suggested treatment or cure if we cannot validate it with the scientific method? Unfortunately, the AI models cannot explain how they derived their answers. Researchers have found that the models make up explanations. Understanding these limitations is essential, especially if we want to use AI in medical research and clinical applications.

I Uploaded My Bloodwork to AI. Am I Oversharing?

By Nicole Nguyen - The Wall Street Journal

The author explores different AI chatbots and the insights they give her when she shares her medical information. While this is tempting and can potentially be educational, the chatbots do not offer healthcare-level security and privacy protections, and we do not know how this information may be used by future versions of the technology.

The ChatGPT Symptom Spiral

By Sage Lazzaro - The Atlantic

Another article warning about the dangers of sharing your medical information with AI chatbots. This time, the fear is the crippling health anxiety the AI chatbot might induce if someone is looking for answers about their health without proper perspective.

Why Scientists are Nervous About Fungi

By Gabrielle Emanuel - NPR

Antimicrobial resistance is worsening and we are not developing drugs to treat resistant bacteria and fungi fast enough.

Under 40 and Diagnosed With Cancer

By Nina Agrawal - The New York Times

While the longevity boom seems to be receiving all the hype, there is a troubling trend of cancer becoming more common in younger people (those under 50) in the past few decades. This piece puts human faces and stories to the statistics. It is a reminder that as much as we try, we do not always have control.

You Can Order Your Own Blood Work Now. Interpreting the Results is Another Story

By Kate Cunningham - NPR

With the increasing use of AI chatbots, the cultural obsession with youth and longevity, and trends like DIY medicine, more and more people are ordering their own tests. One of the main attractions is transparency in price. One of the main challenges is interpreting the results.

A ‘Barbaric’ Problem in American Hospitals is Only Getting Bigger

By Elisabeth Rosenthal - The Atlantic

Here’s news every healthcare professional and fan of The Pitt knows: the problem of emergency department boarding, or patients waiting in the ER for an inpatient bed to become available, is getting worse. Unfortunately, it will continue getting worse as the shortage of healthcare clinicians worsens, the population ages, and the masses become sicker.

I Saved $800 on My Medical Bills With an Easy Trick

By Alex Olgin - Slate

The hospital bill that you receive is not the final word. Rather, it is the starting point of a negotiation.

9 Reasons AI Won’t Take Your Job Yet

By Gary Marcus - Fortune

One of the most thoughtful and measured AI experts is Gary Marcus, an emeritus professor of psychology and neural science at NYU, who reminds us that we shouldn’t get too carried away with AI stealing our jobs.

Economists Once Dismissed the AI Job Threat, But Not Anymore

By Ben Casselman - The New York Times

Some economists were skeptical that AI would flip the labor market on its head. But now they are changing their tune and are warning that policymakers are not moving fast enough to protect us from the potential loss of millions of jobs.

Who’s the Boss?

By Kate Lindsay - Slate

Get ready for managerial slop. One of the major concerns about AI chatbots is that people are losing their ability to think, to reason, or to create because they are offloading the hard work to the large language models. It is not a good sign when managers and bosses are no longer able to answer their employees’ questions and refer them to the chatbots instead.

AI Chatbots

By John Oliver - Last Week Tonight

If you made it this far, thank you and please enjoy!